Evolutionary Computation Classics - Vol. I

The inner core of this (somehow) difficult "endeavour" would be writing and reporting what the creators of those methods did to bring into life a new myriad of nature inspired algorithms which have setting up a whole new area of research, their insights and experiments that helped them to write their stories and, also, the history of a new field.

This post does not intend to claim one method is better (or even the best) than any other (a thunderous voice speaks: Thou Shalt Remember The NFL!), but just a humble try to understand what were the main ideas behind something new and that represented at its time an example of innovation, what could help contemporary researchers, engineers, achievers, innovators, and so on to build their own innovations. I think that the difficulties and necessities those pioneers faced and, more important, overcame are full of interesting lessons we may learn from and use them as a source of inspiration, or a manner to simply admire their works.

This first post deals with the pioneer work of three German students that performed early experiments on what would later develop into a new evolutionary algorithm: Evolution Strategies. Their first experiments happened during the Summer of 1964 at the Technische Universität Berlin (TUB, Technical University of Berlin). Below, the images of Ingo Rechenberg and Hans-Paul Schwefel.

Ingo Rechenberg (Der Bioniker):

Hans-Paul Schwefel (Der Evolutionsstratege):

The third student was Peter Bienert (it is a pity there is no picture of him on the Internet), responsible for an early evolvable hardware experiment: FORO 1 (FOrschungsROboter), the research robot.

When Ingo Rechenberg (born 1934) and Hans-Paul Schwefel (born 1940) were students at TUB, both of them had the opportunity to attend some lectures on the then growing field of cybernetics. Even though those lectures were not on a regular basis, they could listen to researchers such as Dr. Karl Steinbuch (1917-2005), a visionary who predicted the modern world of multimedia and an early designer of neural networks: The learnmatrix.

Professor Karl Steinbuch:

Another professor influenced them: Heinrich Hertel (1901-1982), a professor of aircraft construction and an instigating mentor who advised his pupils to look into nature as a source of inspiration for aerodynamics shapes and related subjects, and never missed a chance of comparing biological forms (dolphins, birds, fishes, and etc.) to the archetypical aerodynamical forms. It would be worthful noting that Schwefel almost became Eugen Sänger's (1905-1964) assistant, but Sänger died right before Schwefel accomplishing his diploma thesis. In such an environment, Rechenberg and Schwefel saw a close connection between cybernetics and any search strategy.

Professor Heinrich Hertel:

Professor Eugen Sänger and his wife, Irene Brendt:

The Silbervogel, a project by Sänger and Irene Brendt. This project was one of the designs considered for the Amerika-Bomber mission:

Another historical figure along that time that was a default influence for any engineer was, with no doubt, the German rocket engineer Wernher von Braun:

Norbert Wiener can be considered as an early pioneer of cybernetics, the field that gave birth to a whole new generation of scientific investigations:

His seminal book on the same subject:

That was the spirit of the time (or the Zeitgeist) in which the evolution strategies (ES) came to this world. It seems that was a fruitful age for new ideas...

The first meeting of Rechenberg and Schwefel at TUB was not in 1964, as some evolutionary computation insiders think, but in November 1963. Schwefel was a coworker newbie student at TUB's Hermann Föttinger-Institute for Hydrodynamics (HFI) and Rechenberg was a seasoned student at the same institute. Both were studying aerospace technology engineering and their respective duties had nothing to do with cybernetics, bionics, or optimization methods. Rechenberg, being a more experient member of HFI, could use the wind tunnel during the afternoons -- when the main research assistants had already left. They started investigating wall shear stress measurements, a subject Rechenberg had been doing for some time. Later, they decided to work on their own subject: Cybernetic Research Strategy, specially for improving (or optimizing) forms of slender bodies (wings, airfoils, airplane bodies, etc.) under air flow. Schwefel main work during this time was (and for which he was paid for) organizing exercises for other students, particularly on measurement techniques in fluid dynamics.

Below there are Ingo Rechenberg (background) and Hans-Paul Schwefel (foreground) at TUB's wind tunnel:

Despite at that time there were well established methods (mainly gradient-based ones, Gauss-Seidel and etc.) to deal with problems holding continuous variables, Rechenberg and Schwefel had found out that the traditional approach of changing one variable at a time and the discrete gradient method did not work in experimental optimization, what was the first focus of their research toward evolution strategies (ES). This impracticality of using those methods is due to the nature of the optimization that takes place in that kind of experiment: Instead of optimizing the parameters of a problem through a computer, the investigator must optimize the real object itself -- without computers!

Experimental optimization means that you have a real physical, mechanical, electrical, optical, chemical, etc. object or some model, like physical, of the real object -- and absolutely no computer -- and you want to alter some of its properties (size, charge, temperature, pressure, etc.) in order to achieve an improved performance according to some criterion (criteria). Before the end of the 1960s Ingo Rechenberg and Hans-Paul Schwefel did not think of using this procedure (an early form of evolution strategy, but without computers) as a numerical method. Computers were not yet available in larger numbers, and if so, their power was rather weak. Let alone noisy measurements and multimodality.

However, unlike traditional approaches of that time, changing all variables at the same time in some random fashion (smaller changes more frequently than larger ones) could work a little better. This was the seed of what would later be a new evolutionary algorithm.

At this point almost everything had already been set up for applying their new method. But another idea came from a somehow strange source... A science fiction story by a not so well known writer: Insel der Krebse (The Island of the Crabs), by Anatolij Dnjeprow. That story has to do with artificial and synthetic evolution ocurring in an island and made by synthetic organisms which mutate themselves to generate new ones. Rechenberg states that this story (also) was his inspiration for using a "population" (it was just a (1 + 1)-ES, that is, one parent generating one offspring and both, later, struggling for survival to become the parent in the next generation/iteration) instead of just one point of search, as it was/is the agenda of traditional methods at the time.

The front cover of Insel der Krebse:

Even though Insel der Krebse literarily covered some of the fundamental ideas (trial and error, mutation and struggling for life) of what would later be evolution strategies (ES), there were some methods at the time that had some, even though loosely, similarities with ES, such as George E. P. Box EVOP and the gradient method itself.

But trial and error experimentation with further selection of the best variatons (mutations) composed the core of what would be the (1 + 1)-ES (also known as The Survival of the Fittest or The Extinction of the Worst). First there is just one parent (μ = 1) generating, through random mutation, an offspring (λ = 1). Then, both of them struggles for survival and the best is taken as the parent of the next generation.

The basic skunk of evolution strategies was born with discrete binomially distributed mutations centered at the ancestor's position, and just one parent and one descendant per generation. Since there is only one parent the use of a recombination operator makes no sense. The absence of a recombination/crossover operator was due to the lack of, at TUB's Hermann Föttinger-Institute for Hydrodynamics (HFI), several objects (airfoils, kinked plates, nozzles, etc.) and several wind tunnels that could operate at same time. The reader should remember that the first evolution strategies experiments were not performed in computers. They were all about experimental optimization, using real world objects and optimizing directly into their features (charge, weight, drag, thrust and so on). Despite not using a recombination operator during early experimental optimization, some few years (1971) later, Rechenberg, in his PhD. dissertation, had demonstrated the advantage of such an evolutionary operator, although investigating the effects of crossover on a (μ + 1)-ES (extinction of the worst) and on a study concerning numerical optimization -- it was along this time he developed a simple self-adaptation mechanism to cope with numerical optimization (the so called 1/5 Success Rule). Moreover, doing some theory was much easier for mutation only. Therefore, this was done first. It is a common misconception in genetic algorithm community that evolution strategies emphasize mutation and neglect recombination, what is not true.

Now, having all the ingredients gathered, it was the crucial moment to test the concept of their new method. The Experimentum Crucis was done at TUB on June 12th, 1964: Six plane planks linked by five adjustable hinges. It is an aerodynamics problem and its optimum solution is trivial: All the plane planks must align horizontally (but the optimal configuration may change depending on the air flux direction). Before doing the experiment, Schwefel used a mechanical calculator with the sum of squares function on integers using binomial mutations to demonstrated the (1 + 1)-ES would work, at least in principle. The principal objective of that experimental optimization was minimizing the kinked plates' drag.

The Experimentum Crucis, an example of experimental optimization:

There are 515 (or 345 025 251) possible combinations for the five adjustable hinges.

The first series of tries on the experimentum crucis was made with random start conditions, later other initial conditions were taken into account, even turning out to be easier, requiring less mutations to reach a satisfactory solution, although the optimum solution was known and trivial. The idea behind using a problem holding a known solution was twofold:

A) Be able to prove convergence;

B) Demonstrate that the experimental method devised by Rechenberg and Schwefel did not take too much iterations to reach the optimum (or a satisfactory solution).

Some critics at the time predicted that such a method would need years or even millions of years to find a reasonable solution. Today it is not uncommon finding out people who claim on the grounds of pure random search when it comes to natural evolution.

The problem set up for the experimentum crucis' (experimental) optimization was finding the best reachable solution for the kinked plates' aerodynamic configuration, optimizing, along the way, some variables, mainly the drag.

That experiment can be considered as a sort of OneMax for an experimental optimization task. OneMax is a well known fitness function applied to retrieve the initial feedbacks from a new evolutionary algorithm and/or optimization method. That function is just a bit counting problem in which the algorithm must find the a binary string that has the maximum number of bits one. Therefor, if a binary string has length 5 (5 bits):

S1 = B1 B2 B3 B4 B5

Then, the best (optimum) solution in that OneMax problem is the string in which all tge bits Bn are 1:

Soptimum = 1 1 1 1 1

The core procedure to find the best solution is simply summing up each bit in the string.

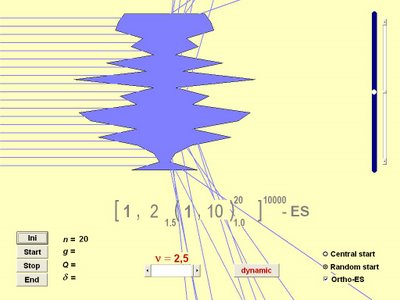

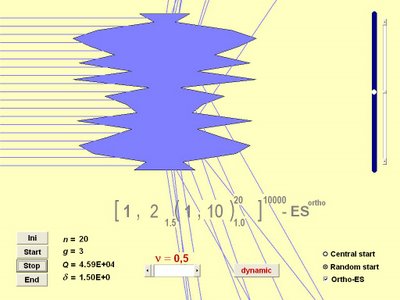

An OneMax optimized by an evolution strategy:

Rechenberg and Schwefel selected a simple problem (the kinked plates experimental optimization) because they could verify the initial behavior of their method during the optimization search and, thus, they could get the first impressions of their method. For solving the experimentum crucis problem, they used an early form of a simple (1 + 1)-ES with binomially distribution random changes in the current best kinked plates' shape. In a (1 + 1)-ES there is just one parent (μ = 1) generating a single offspring (λ = 1) through random mutation. The whole optimization was made without any computer aid and completely performed by hand. In the 1960s West Germany, computers were not available as they are today, let alone during that time computational power was weak. Not to mention the fact that Rechenberg and Schwefel did not think at all of using their method as a numerical optimization search technique until around 1970. The migration of the early evolution strategy from the experimental optimization realm to the numerical venue was Schwefel's "crazy" idea when he began looking for a competitive derivative-free numerical optimization method.

The mutation operator applied was some kind of children's playing chips with a plus sign on one side and a minus sign on the opposite side. In the simplest case with two chips the result could be:

1) ++ with probability 1/4 for changing a variable in positive direction, or;

2) -- also with probability 1/4 for changing a variable in negative direction, or;

3) +- (or -+) with probability 1/2 for no change.

With more chips one could of course produce broader binomial probability distributions. A Galton box was used too, but only for demonstration purposes (see the image below).

A Galton box and the experimentum crucis:

The amount of iteration (or generations) the simple two membered (1 + 1)-ES needed was not 200, but different amountd in two successive tries. The best solution found was not flat at all, because drag measurements were not exact enough to distinguish between what would be flat and nearly flat.

The experimental optimization's iterations for the experimentum crucis:

Schwefel's diploma thesis, finished in March 1965, revealed the simple two membered (1 + 1)-ES can get stuck in such a discrete environments and, henceforth, they decided to concentrate on continuous Gaussian mutations. For his diploma thesis, Schwefel simulated an evolution strategy on a ZUSE Z23 computer, a creation of the German computer pioneer Konrad Zuse. In the same year, another student, called H. J. Lichtfuß (Evolution eines Rohrkrümmers Diplomarbeit, Technische Universität Berlin, Deutschland, 1965), got good results optimizing bended pipes through evolution strategy made as an experimental optimization procedure.

Z23 computer data sheet:

The Z23 computer was applied on a wide range of areas, from contructional engineering to ballistics and electronics design. It was available with compilers for the German formula code and for ALGOL 60.

The Z23 console:

Konrad Zuse:

Schwefel programmed the first cotinuous and computer implemented evolution strategy in the Z23, writing the code in an assembly-like programming language named Freiburger Code. This language was designed for solving mathematical problems and in an easier manner than writing the code in pure machine language. He took 1000 random numbers from a book and stored them as an array. His continuous and computer implemented (1 + 1)-ES program was put on a punched tape. The objective (or fitness) function used was a simple two dimensional parabolic ridge with positive integer numbers for the two variables. He started his simulation on an evening and got the results the next morning.

When Schwefel got his results he got astonished about the patterns of failure the method revealed depending on the angle of inclination of that ridge and the pattern of the mutations (e.g. in the Moore neighborhood only) that method, the (1+1)-ES with binomially distributed mutations, could be stuck in solutions that even were not local optima. He found a theoretical explanation for that, finally, within his diploma thesis in 1965. Since he could easily see that the univariate (or Gauss-Seidel or one-variable-at-a-time or coordinate) strategy would have even more problems on the ridge and that problem would be too easy for a (continuous) gradient strategy, he did not test them explicitly.

Although the somehow good results Ingo Rechenberg and Hans-Paul Schwefel achieved using the simple (1 + 1)-ES, the German academic establishment at that time did not welcome their evolution strategy method in a good way. The establishment was, indeed, skeptical. Maybe, this reaction could be an expression of the 1960s optimization methods' Zeitgeist. Even there were optimization researchers claiming they did not need another search method except the gradient one (steepest ascent/descent). One of the main researchers at TUB's Hermann Föttinger-Institute for Hydrodynamics (HFI) went to the point of saying that "cybernetics as such will no longer be done at this institute". After this, Rechenberg and Schwefel had to come back to work in fluid dynamics or they would have to leave HFI and they decided to leave.

After getting away from HFI, Ingo Rechenberg started to investigate the theoretical aspects of the (1+ 1)-ES and Hans-Paul Schwefel got a job because he had married with his girlfriend, Ms. Antje Schulte-Sasse (now Ms. Antje Schwefel and the person behind the biennial report Blaues Heft) and got children some time after marrying. Then, Schwefel went to an industrial research facility at AEG (Allgemeine Elektrizitäts-Gesellschaft) and also maintened an academic connection with the Institute of Nuclear Technology of the Technical University of Berlin. At the AEG research laboratory, Klockgether and Schwefel made an interesting evolution strategy application: The optimization of a two-phase flashing nozzle. That was, again, made as an experimental optimization.

Klockgether and Schwefel needed about 200 generations (or iterations) to find a sub-optimal solution for such a problem and it was made 45 improvements on the nozzle initial configuration. This was made, again, without computers, since no simulation model was available at the time an experimental optimization had to be performed, using a so-called (1+1) Evolution Strategy. The nozzles were built of conical pieces such that no discontinuity within the internal shape was possible. In this way every nozzle shape could be represented by its overall length and the inside diameters at the borders between the segments (every 10mm). For technical reasons the incoming diameter of the first segment had to be 32mm and the smallest diameter was fixed to 6mm resulting in an convergent-divergent structure of the nozzles. It was one of the early uses of gene deletion and duplication as evolutionary operators.

The two-phase flashing nozzle set up:

Even today, it is not possible to calculate what happens within such a nozzle: thermodynamically far away from equilibrium, drag between slow water droplets and fast steam, three-dimensional turbulent boundary layer with liquid sub-layer, supersonic behind nozzle throat, and etc. As someone could ask himself, that design principle behind that nozzle was never applied to real rocket propulsion, because, to do this, someone would need to do the experiments once more for bigger rockets.

At the time, there were some arguments against that nozzle design, being the principal one the non-optimal shape achieved and the strange design method employed. The first part of this argument seems strange because there was no a priori optimal solution known for the nozzle problem and there is no way of stating a speficic shape is the optimum.

Below, the intial and final nozzle designs:

The two-phase flashing nozzle video:

Despite achieving good results employing the new-born evolution strategy method, Rechenberg and Schwefel needed research grants to continue their investigations, but could not get finnancial support because both of them lacked a PhD. degree at the time. Even though, they made a request for finnancial support, but it was denied. Later, they tried to convince their professors, Professor Helmcke (a biologist) and Professor Gast, to undersign a research proposal on evolution strategies and employ them as research assistants. But, there were some negative recommendations coming from peer reviewers that analysed the proposal and there was someone who disliked the term "evolution strategy", what made the "wanna be" research assistants guessing it was made by some creationist.

It was a pity the research proposal was denied, because Rechenberg and Schwefel were thinking of new features for their method: Bigger populations; theoretical analysis; new mutation operators; real-valued variables; new computer implementations; and the contruction of an automaton (FORO 1, by Peter Bienert) operating under the rules of their method. Those features were only investigated some years later along the 1970s and expanded throughout the next 20 years.

But it all makes sense when viewed under the light of how important publications were and are. Without them, it is almost impossible of obtaining research grants. The only "publication" they got was a brief article in the popular magazine Der Spiegel.

Below, Ingo Rechenberg and the Der Spiegel article:

On the role of publications, Schwefel states:

Since we were foolishly underestimating the role of publications and spent no time for that (perhaps, we would not have been successful in trying to send papers to journals or conferences -- we were students first and even later had no higher academic degrees like PhDs), the community did not hear/read about our work. Those who heard about reacted in different ways: Students in other faculties of TUB tried to imitate our ES. Most professors took our ideas for nonsense, except for two: a biologist and Prof. Gast.

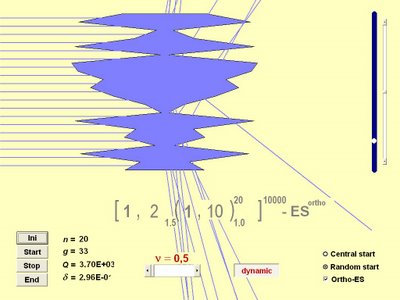

Ingo Rechenberg, Hans-Paul Schwefel and Peter Bienert would come back to work together, in the early 1970s, at the Institute of Measurement and Control Engineering. Schwefel's PhD. dissertation was not finished before 1974, but in the next year he got his PhD., while Rechenberg got his professorship at, ironically, Technical University of Berlin. It seems that Peter Bienert stayed at Rechenberg's new born group at TUB: Bionik und Evolutionstechnik. Schwefel's dissertation results demonstrated that the simple two membered (1 + 1)-ES behaved very similar to other local search methods, and he realized only multimemberd evolution strategy (an evolution strategy that has a population bigger than one or two) could do a satisfactory optimization job with some possitive probability.

Along the 1970s, Rechenberg began to more thoroughly study the theoretical aspects of evolution strategies. For this task, he used quite simple objective (or fitness) functions, such as the sphere model and the corridor. From these investigations, was born one of the early mechanisms to self-adapt the parameters of an evolution strategy: The so called 1/5 Success Rule.

The 1/5 rule states: If less than 20% of the generations are successful (i.e. offsprings better than parents), then decrease the step size for the next generation; if more than 20% are successful, then increase the step size in order to accelerate convergence.

It is an extremely simple rule and its robustness can be considered everything but weak. However, it was a fisrt step into the quest for a strong self-adaptation mechanism.

The cannonical self-adaptation mechanism was devised by Hans-Paul Schwefel in the second half of the 1970s. It uses a lognormal function and it is as follow:

The aforementioned self-adaptation mechanism distributes the Gaussian density function in the following way along the axes:

We can clearly see that the use of that mechanism spread the mutation distribution in a ellipsoidal fashion through the fitness landscape, but it can only follow directions that are aligned with the coordinate system and it has no capabilities for getting interdependence among objective (or fitness) function's variables. When dealing with problems of big dimensions, the ellipsoids become hyperellipsoids that can expand or contract along the axes directions only.

There is a simpler lognormal self-adaptation mechanism:

It also distributes the mutations in the same manner as the previous mechanism, but, instead of being ellipses, the shapes are circles:

It sees no interdependence among variables too and cannot expand or contract along the axes directions as the former mechanism does. For problems of high dimensions, the circles become hyperspheres.

The other mechanism is almost the same as those ones above, but this one can take into account the interdepence among the variables. It sees the interdepence through the calculation of the covariance matrix:

The alphas are rotation angles and the beta is a constant value chosen as 0.0873. Now, the mutation operator can spread an offspring along directions non-aligned with the coordinate system:

Now, there is an extra degree of freedom, since the ellipsoids now not only can contract and expand along axes directions, but they can also rotate toward directions that are not aligned with those axes.

Below, there is an example of a generic representation for an evolution strategy individual:

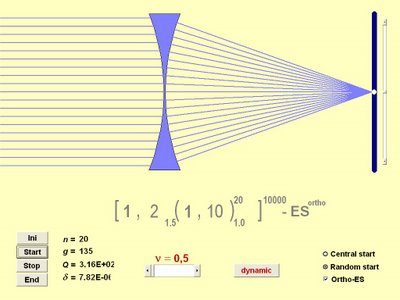

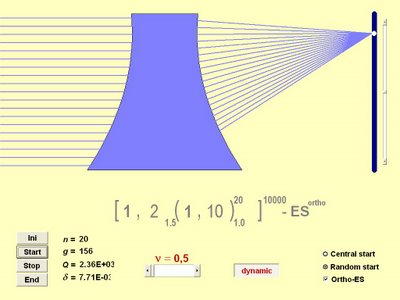

Those developments came from Schwefel's experiences with numerical optimization and he introduced in his PhD. thesis the well known evolution strategy nomenclature: The so called comma notation, (μ, λ)-ES; and the plus notation (μ + λ)-ES. Now, instead of using just one parent (μ = 1) and one offspring (λ = 1), there are μ parents generating λ offspring.

The main difference between the comma and the plus strategies has to do with the selection mechanism. The plus strategy works as follows: There are μ parents generating λ offspring and, then, both parents and offspring compose a temporary population from which the best μ individuals are selected to become parents in the next generation (or iteration). The comma strategy follows a similar fashion, that is, there are μ parents generating λ offspring too, but the selection takes place only in the offspring set from which the best μ individuals are selected to be parents in the next generation.

The plus strategy seems to work better when it comes to combinatorial problems and it can get stuck easily in local optima when performing continuous optimization. On the other hand, the comma approach is better suited to continuous/parametric optimization problems and one of its main advantages is exactly the selection mechanism which permits a temporary degradation of the best solution found so far to overcome local optima -- however, the comma approach can work poorly if ill configured, for example setting μ = λ, what is a simple random walk.

The inclusion of the crossover (or recombination) operator changed a little the "official" evolution strategy notation, adding a new parameter: The number of individuals involved in recombination, ρ. Then, the aforesaid notations would be: (μ/ρ, λ)-ES and (μ/ρ + λ)-ES, ρ can be set up to μ (ρ = μ), what permits multi-individual crossover.

There are two types of crossover: Intermediary and discrete. The first one is just a centroid calculation or the center of mass.

The intermediary crossover scheme:

The other scheme is a simple random selection following a coordinate wise procedure.

The discrete crossover scheme:

In a paper from 1995, Hans-Paul Schwefel and Günter Rudolph proposed what they called comtemporary evolution strategies and included another parameter into the notation: The lifespan, represented by κ. The new notation would be (μ/ρ, λ, κ)-ES. That new parameter is important because it would avoid a candidate solution of surviving forever and trapping the search in local optima.

Recently, the derandomization approach has been incorporated into evolution strategies, such as CMA-ES, but this is other long story.

Along the time Rechenberg and Schwefel were developing the evolution strategy, in the other side of Atlantic Ocean there was another community investigating a similar algorithm. The evolutionary computation communities in each side of the Atlantic Ocean would only meet in 1990, at PPSN I (Paralell Problem Solving from Nature, David Edward Goldberg helped to translate that conference name into English from the German Naturanaloge parallele Problemlösungs-Strategien). But there was an "unofficial" meeting before this date, in 1989 David E. Goldberg and Yuval Davidor met in Germany, what could be considered the first encounter of both communities. It is fair to say that when Rechenberg and Schwefel finished their respective PhD. dissertations, no one was aware of Professor John Holland's pioneer work on genetic algorithms, that was made almost concurrently with the German evolution strategies. The Germans even "missed" Holland's seminal book Adaptation in Natural and Artificial Systems when it was published for the first time, but, later, both of them got aware of Holland's work (Schwefel gave Holland's book as a birthday gift to Rechenberg). Around 1969-1970, Schwefel began to sistematically search for derivative free optimization methods and found interesting works that had, in essence, a similarity with evolutionary approaches:

Hans-Joachim Bremermann at Berkeley;

Lawrence .J. Fogel at San Diego;

Leonard A. Rastrigin at Riga (USSR);

J. Matyas in Czechoslovakia;

J. Born (Evolutionsstrategien zur numerischen Lösung von Adaptationsaufgaben, 1978) at Berlin, in the Eastern side of Berlin Wall;

G. Rappl (Konvergenzraten von Random Search Verfahren zur global Optimierung, 1984) at Munich.

Schwefel tried to contact some of those researchers. He first got into contact with Rastrigin, in Riga (USSR) and with Matyas in CSR -- Rastrigin even sent him a copy of a book (monograph) about a random search method he developed in which trials were done on the surface of a hypersphere. Some years later, Schwefel got into contact with Bremermann, but was unable to maintain any academic connection due to the lack of Bremermman's address. Schwefel got some papers from and a copy of Lawrence J. Fogel's book Artificial Intelligence Through Simulated Evolution.

There were no important evelution strategy developments between 1976 and 1988. Schwefel began his professorship at University of Dortmund (now Technical University of Dortmund) in 1985 and evolutionary algorithms were just a minor topic inside the main branch named systems analysis. The first student interested in evolutionary algorithms appeared only in 1989, after hearing of genetic algorithms. In the next decade (1990s), evolution strategy and evolutionary computation researchers focused their attentions to theoretical and complexity analysis of evolutionary algorithms and from those investigation have arisen the first steps to formalize evolutionary algorithms, despite disagreements between researchers. The 1990s also saw the emergence of a new evolutionary based approach: Estimation of Distribution Algorithms.

The kind of work Ingo Rechenberg, Hans-Paul Schwefel and Peter Bienert made in the 1960s can be considered an achievement that rarely happens during a lifetime. After 45 years of hard work it is very beautiful to see their work has helped to establish a whole new research area and all the results investigators all around the world (and, alas, other planets) have got.

Nowadays, Ingo Rechenberg is the head of Bionik & Evolutionstechnik group at Technischen Universität Berlin. Peter Bienert works with him there.

Hans-Paul Schwefel is now retired from the former systems analysis chair (now algorithm engineering) at University of Dortmund. He is an emeritus professor at Dortmund.

All models are wrong, but some of them are useful.

(G.E.P. Box)

Some Acknowledgements now:

I am very grateful to Professor Hans-Paul Schwefel, who made all the corrections in the previous text and also suggested some points to speak about. I thank Professor Schwefel, also, for replying me a very long list of questions (50!!! and another set concerning the 45 years of evolution strategies) about the first works in evolution strategies and for his patience to answer all the question set.

I would like to thank Ms. Antje Schwefel for delivering some texts of mine to her husband, Professor Schwefel, and, also, for replying me some questions about Chinese and Japanese languages. Thank you for translating my name into Chinese too.

Thank You, Professor David Edward Goldberg, for your correction about the "official" meeting of USA and German evolutionary computation communities.

Labels: Evolution Strategy, Evolutionary Algorithm, Evolutionary Computation, Genetic Algorithm, Hans-Paul Schwefel, History, Ingo Rechenberg, Old School, Peter Bienert, Pioneer